This material is based on Giuseppe Cerati’s presentation at the LArSoft Coordination meeting, “Integration of NuGraph GNN into LArSoft,” which covered work by the Exa.TrkX collaboration based on the MicroBooNE open samples. The LArTPC core team consists of A. Aurisano (UCincinnati), G. Cerati (FNAL), V. Hewes (UCincinnati), and J. Kowalkowski (FNAL). It also draws on material from the NuGraph2 Workshop, specifically the presentation by V. Hewes titled “A graph network for particle reconstruction.”

Figure 1: Exa.TrkX

Graph Neural Networks (GNNs) have been used successfully for tracking applications at the Large Hadron Collider (LHC). They can also be applied to low-level LArTPC reconstruction (Eur. Phys. J. C 81 (2021) 10, 876; e-Print: 2103.06995).

Neutrino physics sensitivity is impacted by limitations in the reconstruction, such as:

- neutrino vertex identification

- contamination from cosmic ray background

- under-clustering of EM showers

NuGraph2, a general-purpose particle reconstruction GNN developed for use in LArTPC neutrino detectors, provides the opportunity to:

- tackle these limitations early (upstream) in the reconstruction chain

- make information available for downstream analysis

- provide a LArSoft-integrated environment for straightforward processing and data management

- enable synergies with hit-based reconstruction (e.g. Pandora)

Though originally developed within the context of the DUNE far detector, this network architecture is broadly applicable and is not tied to any particular detector geometry. It is also being deployed on detector technologies other than LArTPCs.

Representation of LArTPC data as a graph

LArTPC hits can be connected in a graph. A graph is a mathematical structure that represents objects (nodes) and pairwise relationships between them (edges). This structure suits physics data because a graph can encode relationships beyond nearest neighbors. Graphs can also connect hits from different planes, making the network “3D-aware.”

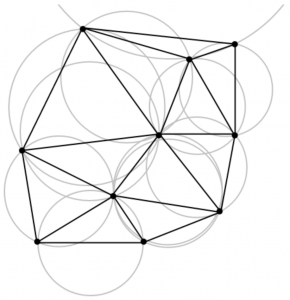

The main inputs to the GNN are the Hits. Within each plane, hits are connected in a graph using Delaunay triangulation (see Figure 2 below). The resulting graph is connected, with both long- and short-distance edges that can jump across unresponsive wire regions. Hit associations to 3D SpacePoints are used to create “nexus” connections across the graphs in each plane.


Figure 2: Delaunay triangulation (https://en.wikipedia.org/wiki/Delaunay_triangulation). Image: Nü es, CC BY-SA 3.0, http://creativecommons.org/licenses/by-sa/3.0/, via Wikimedia Commons
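The planar and nexus graph construction described above can be sketched in plain Python. This is a minimal sketch, not the LArSoft or NuGraph2 implementation. It makes three assumptions:

- each hit is reduced to a 2D point such as (wire, drift time);
- the planar edges come from the textbook Bowyer-Watson Delaunay algorithm;
- hit-to-SpacePoint associations are given as a hypothetical list of (plane, hit index) pairs per space point.

The helper names `delaunay_edges` and `nexus_edges` are illustrative only.

```python
def _in_circumcircle(tri, p, pts):
    """True if point p lies strictly inside the circumcircle of triangle tri.

    tri is a tuple of three indices into pts; collinear triangles return False.
    """
    (ax, ay), (bx, by), (cx, cy) = (pts[k] for k in tri)
    d = 2.0 * (ax * (by - cy) + bx * (cy - ay) + cx * (ay - by))
    if abs(d) < 1e-12:
        return False
    a2, b2, c2 = ax * ax + ay * ay, bx * bx + by * by, cx * cx + cy * cy
    ux = (a2 * (by - cy) + b2 * (cy - ay) + c2 * (ay - by)) / d
    uy = (a2 * (cx - bx) + b2 * (ax - cx) + c2 * (bx - ax)) / d
    r2 = (ax - ux) ** 2 + (ay - uy) ** 2
    return (p[0] - ux) ** 2 + (p[1] - uy) ** 2 < r2 - 1e-9


def delaunay_edges(points):
    """Undirected edges (i, j), i < j, of the Delaunay triangulation of 2D hit points.

    Bowyer-Watson insertion; assumes at least one point and no exactly
    cocircular or collinear degeneracies.
    """
    n = len(points)
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    mid_x, mid_y = (min(xs) + max(xs)) / 2, (min(ys) + max(ys)) / 2
    big = 100.0 * max(max(xs) - min(xs), max(ys) - min(ys), 1.0)
    # Append three "super-triangle" vertices (indices n, n+1, n+2) enclosing every hit.
    pts = list(points) + [(mid_x - big, mid_y - big),
                          (mid_x + big, mid_y - big),
                          (mid_x, mid_y + big)]
    triangles = {(n, n + 1, n + 2)}
    for i in range(n):
        # Triangles whose circumcircle contains the new hit are no longer Delaunay.
        bad = {t for t in triangles if _in_circumcircle(t, pts[i], pts)}
        edge_count = {}
        for a, b, c in bad:
            for e in ((a, b), (b, c), (c, a)):
                key = (min(e), max(e))
                edge_count[key] = edge_count.get(key, 0) + 1
        triangles -= bad
        # Edges used by exactly one bad triangle bound the cavity; fan them to the new hit.
        triangles |= {(a, b, i) for (a, b), count in edge_count.items() if count == 1}
    edges = set()
    for a, b, c in triangles:
        if max(a, b, c) < n:  # discard triangles touching a super-triangle vertex
            edges |= {(min(a, b), max(a, b)), (min(b, c), max(b, c)), (min(a, c), max(a, c))}
    return edges


def nexus_edges(spacepoint_hits):
    """Flatten hit-to-SpacePoint associations into (plane, hit, spacepoint) edges.

    spacepoint_hits[k] is a list of (plane, hit_index) pairs associated with space point k.
    """
    return [(plane, hit, sp)
            for sp, hits in enumerate(spacepoint_hits)
            for plane, hit in hits]
```

In practice, a tested library routine such as SciPy's `scipy.spatial.Delaunay` would normally replace this O(n²) loop. The hand-written version only makes the construction explicit.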

NuGraph2 Network Architecture

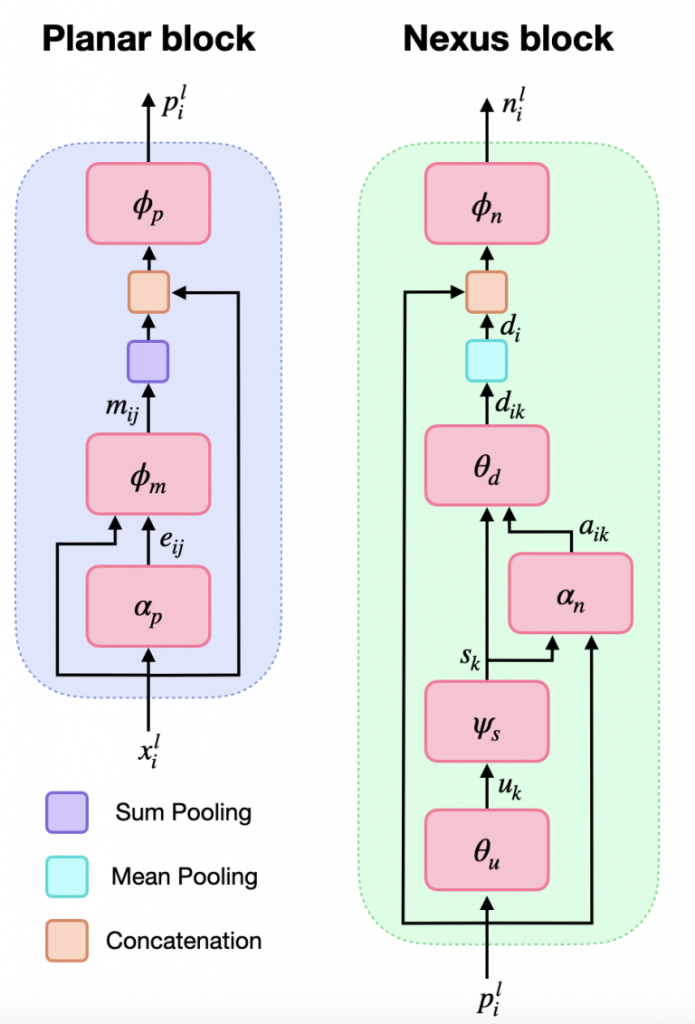

The initial application for the GNN is semantic hit classification, in which each hit is assigned a category based on the type of particle that produced it.

NuGraph2’s core convolution engine is a self-attention message-passing network that uses a categorical embedding. Each particle category is given its own set of embedded features, which are convolved independently. Context information is exchanged between particle categories via a categorical cross-attention mechanism.
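The categorical scheme above can be illustrated with a parameter-free toy version. It assumes each node stores one small embedding vector per particle category.

- Step 1 convolves each category independently, using dot-product attention over a node and its neighbors.
- Step 2 lets the categories at a node attend to one another, standing in for the categorical cross-attention.

The function and argument names are hypothetical. A trained network such as NuGraph2 would use learned projections and nonlinearities, which are omitted here.

```python
import math


def _softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def _dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def _weighted_sum(weights, vectors):
    return [sum(w * vec[d] for w, vec in zip(weights, vectors)) for d in range(len(vectors[0]))]


def categorical_attention_step(features, neighbors):
    """One toy update of per-category node embeddings.

    features[node][cat] is the embedding (list of floats) of `node` for category `cat`;
    neighbors[node] lists the indices of the nodes connected to `node`.
    """
    n_nodes, n_cat = len(features), len(features[0])
    # Step 1: per-category message passing. Category c only ever reads category c,
    # so the categories are convolved independently.
    convolved = []
    for v in range(n_nodes):
        sources = [v] + list(neighbors[v])
        row = []
        for c in range(n_cat):
            msgs = [features[u][c] for u in sources]
            weights = _softmax([_dot(features[v][c], m) for m in msgs])
            row.append(_weighted_sum(weights, msgs))
        convolved.append(row)
    # Step 2: categorical cross-attention. At each node, every category attends over
    # all categories, which is where context is exchanged between particle types.
    updated = []
    for row in convolved:
        updated.append([_weighted_sum(_softmax([_dot(q, k) for k in row]), row) for q in row])
    return updated
```

Both steps form convex combinations, so every output coordinate stays within the range of the input values. That is a property of this toy, not a claim about the real network.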

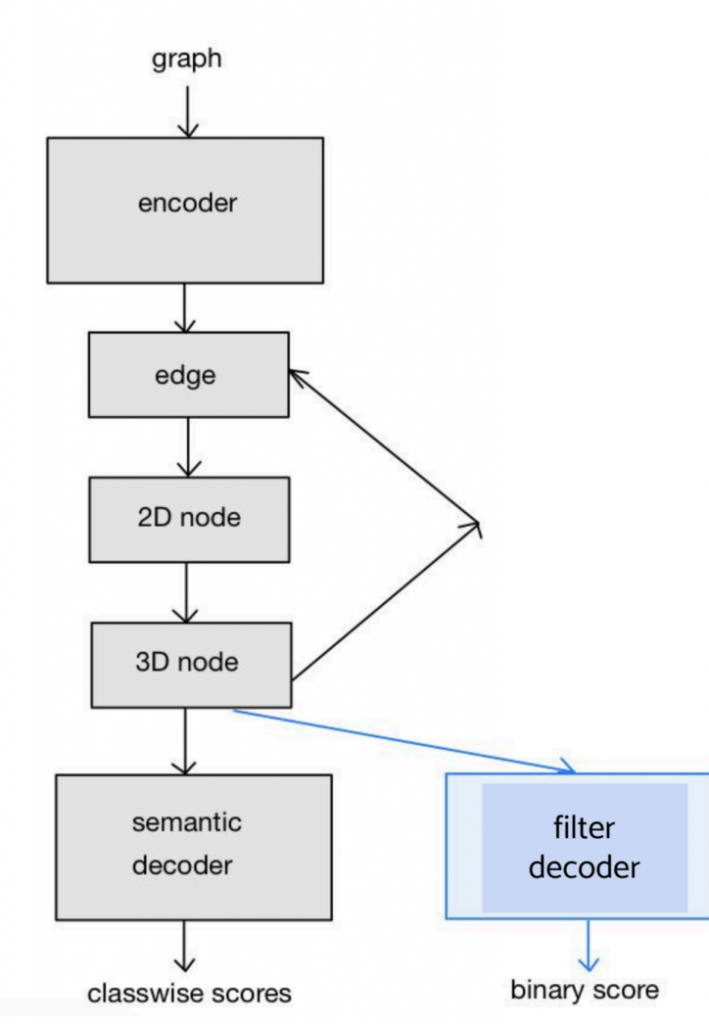

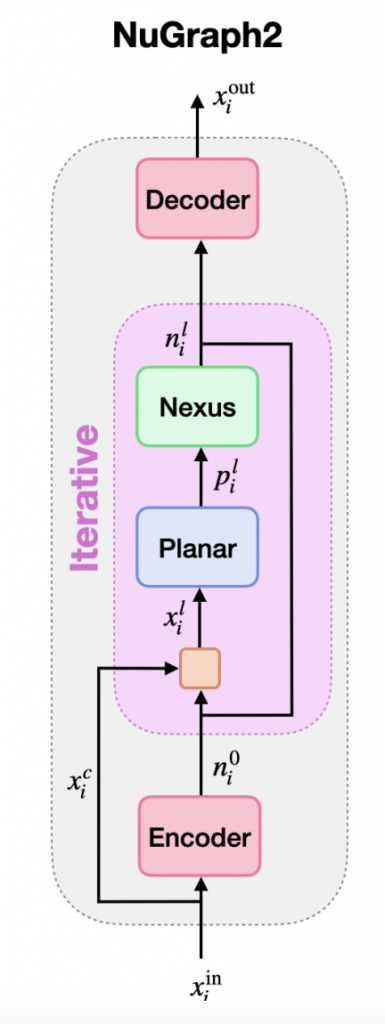

Each message-passing iteration consists of two phases, the planar step and the nexus step.

Figure 3: The two phases of message passing

Within an iteration, messages are first passed internally in each plane, and then passed up to 3D nexus nodes to share context information.
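One iteration of this two-step engine can be sketched with simple mean aggregation. The sketch rests on these assumptions:

- features are plain lists of floats;
- hits are grouped by hypothetical plane labels such as "u", "v" and "y";
- planar edges are index pairs within a plane;
- nexus edges are (plane, hit, space point) triples.

The sketch also sends each nexus node's summary back down to its hits, as one assumed way of sharing the 3D context. NuGraph2 itself uses attention-weighted messages rather than plain means.

```python
def _mean(vectors):
    """Component-wise mean of a non-empty list of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def planar_step(hits, planar_edges):
    """Average each hit with its neighbors inside the same plane.

    hits[plane][i] is the feature vector of hit i; planar_edges[plane] lists (i, j) pairs.
    """
    updated = {}
    for plane, feats in hits.items():
        nbrs = {i: [] for i in range(len(feats))}
        for i, j in planar_edges.get(plane, []):
            nbrs[i].append(j)
            nbrs[j].append(i)
        updated[plane] = [_mean([feats[i]] + [feats[j] for j in nbrs[i]]) for i in range(len(feats))]
    return updated


def nexus_step(hits, nexus_edges):
    """Pass hit features up to 3D nexus nodes, then pass the 3D context back down.

    nexus_edges lists (plane, hit, spacepoint) triples.
    """
    incoming = {}
    for plane, hit, sp in nexus_edges:
        incoming.setdefault(sp, []).append(hits[plane][hit])
    nexus = {sp: _mean(vecs) for sp, vecs in incoming.items()}  # up: combine the planes
    context = {}
    for plane, hit, sp in nexus_edges:
        context.setdefault((plane, hit), []).append(nexus[sp])
    # down: each hit mixes its own features with the nexus nodes it is attached to
    return {plane: [_mean([f] + context.get((plane, i), [])) for i, f in enumerate(feats)]
            for plane, feats in hits.items()}


def message_passing(hits, planar_edges, nexus_edges, iterations=1):
    for _ in range(iterations):
        hits = planar_step(hits, planar_edges)  # phase 1: within each plane
        hits = nexus_step(hits, nexus_edges)    # phase 2: across planes via 3D nexus nodes
    return hits
```

With more than one iteration, context that reached a hit through a nexus node in one iteration spreads to that hit's planar neighbors in the next. Alternating the two phases is what gives this propagation.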

This iterative two-step message-passing engine forms the backbone of the NuGraph2 architecture. An initial encoding step generates a learned embedding for each graph node. The output of the message-passing engine can be forwarded into any number of decoders for a variety of tasks such as semantic hit segmentation and background filtering.
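The encoder, core and decoder split can be illustrated with a toy pipeline. This is an assumption-laden illustration rather than NuGraph2 code:

- the encoder and decoders are single linear layers with hand-set weights;
- the message-passing core is passed in as any function on the list of embeddings;
- a semantic decoder returns per-class softmax scores;
- a binary filter decoder returns a sigmoid score that a hit is signal rather than background.

All names and weights are hypothetical.

```python
import math


def linear(x, weights, bias):
    """Dense layer: weights is a list of rows, one row per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def softmax(z):
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def run_pipeline(raw_hits, encoder, core, decoders):
    """Encode every hit, run the message-passing core, and apply each decoder head."""
    embeddings = core([encoder(h) for h in raw_hits])
    return {name: [head(e) for e in embeddings] for name, head in decoders.items()}


# Hypothetical hand-set weights: 2 input features -> 2-dim embedding -> 3 classes.
def encoder(hit):
    return linear(hit, [[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0])


def identity_core(embeddings):
    # Stand-in for the planar/nexus message-passing engine.
    return embeddings


def semantic_head(e):
    return softmax(linear(e, [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]], [0.0, 0.0, 0.0]))


def filter_head(e):
    return sigmoid(linear(e, [[1.0, 1.0]], [0.0])[0])
```

For example, `run_pipeline(hits, encoder, identity_core, {"semantic": semantic_head, "filter": filter_head})` returns both heads' outputs for every hit. Because every decoder reads the same embeddings, adding a task only means adding another entry to the `decoders` dictionary.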

Figure 4: Decoding

Figure 5: Semantic + Binary Decoders

Information on the NuGraph2 model integration is available on the LArSoft wiki: https://larsoft.github.io/LArSoftWiki/NuGraph2_integration